Beyond the OEM Manual: A Self-Correcting AI for Real-World RUL

An OEM manual says your motor lasts 13 years. But has it had an easy life or a hard one? This report details a modern, self-correcting AI strategy that doesn't need the full history. Discover how a hybrid model uses live sensor data to uncover an asset's true health and deliver a RUL forecast that adapts to the real world.


Executive Summary

This report addresses the specific challenge of creating a predictive model for a 10-year-old motor with an OEM lifespan of 13 years, using only the last year of available data. This scenario is common and requires a departure from traditional supervised learning methods, which rely on extensive historical data covering multiple failure cycles.

Key Innovation: The proposed strategy is a phased approach that acknowledges the motor's late-life status and the limited data window. The key is to focus on modeling the motor's current state of health and detecting deviations from this baseline, rather than trying to predict the full RUL from first principles.

Value Proposition: This approach provides immediate value through anomaly detection and can be evolved into a more sophisticated prognostic tool.

The Core Challenge: Unknown History and a Shifting Baseline

The primary difficulties in this case are:

Unknown Degradation History: We have no data for the first nine years of operation. We cannot know what stresses the motor has endured or its precise level of wear. We must assume it is not in an "as-new" condition.

Shifting Baseline of "Normal": The operational signature of a 10-year-old motor is inherently different from that of a new motor. Bearings will have some wear, and insulation may be slightly degraded. The available one year of data represents this "aged normal," not a pristine baseline.

Data Insufficiency for RUL Models: One year of data from a single asset with no failure event is insufficient to train a supervised model to predict the remaining three years of life.

Proposed Strategy: A Phased, Data-Efficient Approach

The solution is to build a model of the asset's current behavior and use it to detect future changes. This provides actionable insights without needing the full nine years of missing data.

Phase 1: Establish a "Current Normal" Baseline

Model the Baseline: Use the available one year of feature data (as extracted by the edge processing pipeline) to train an unsupervised anomaly detection model (e.g., Isolation Forest, Autoencoder, or Gaussian Mixture Model).

How It Works: This model learns the multi-dimensional signature of the motor's operation over the past year, including its response to different loads, speeds, and temperatures. This learned signature becomes the reference for its "current normal" state of health.

Immediate Value: The model is deployed in real-time. Any new data point that deviates significantly from this learned baseline triggers an anomaly alert. This will not predict the RUL in years, but it will immediately flag if the motor's condition begins to degrade faster than it has in the past year. It answers the question: "Is the health of my motor changing for the worse right now?"
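A minimal sketch of the Phase 1 baseline, assuming the edge pipeline delivers one row of numeric features per day. To stay dependency-free it uses a single multivariate Gaussian scored by Mahalanobis distance, which is the one-component special case of the Gaussian Mixture Model option; an Isolation Forest or Autoencoder would replace `score()`. The feature names (load, temperature, bearing fault amplitude), the synthetic data, and the 99.5th-percentile alarm threshold are illustrative assumptions, not values from a real motor.

```python
import numpy as np


class GaussianBaseline:
    """'Current normal' model: one multivariate Gaussian fitted to a year of features."""

    def fit(self, X, quantile=99.5):
        X = np.asarray(X, dtype=float)
        self.mean_ = X.mean(axis=0)
        cov = np.cov(X, rowvar=False)
        # Small ridge term keeps the covariance invertible if features are collinear
        self.inv_cov_ = np.linalg.inv(cov + 1e-9 * np.eye(X.shape[1]))
        # Alarm threshold: a high percentile of the scores seen during the baseline year
        self.threshold_ = np.percentile(self.score(X), quantile)
        return self

    def score(self, X):
        """Squared Mahalanobis distance of each row from the baseline mean."""
        d = np.atleast_2d(np.asarray(X, dtype=float)) - self.mean_
        return np.einsum("ij,jk,ik->i", d, self.inv_cov_, d)

    def is_anomalous(self, X):
        return self.score(X) > self.threshold_


# Synthetic "aged normal" year: features respond to load (assumed relationships)
rng = np.random.default_rng(0)
load = rng.uniform(0.4, 1.0, 365)                    # per-unit load
temp = 40 + 20 * load + rng.normal(0, 1.0, 365)      # winding temperature, deg C
bfa = 0.40 + 0.05 * load + rng.normal(0, 0.01, 365)  # bearing fault amplitude
X_year = np.column_stack([load, temp, bfa])

model = GaussianBaseline().fit(X_year)
normal_day = np.array([[0.7, 54.0, 0.435]])  # consistent with the learned load response
worn_day = np.array([[0.7, 54.0, 0.50]])     # same load and temperature, higher bearing amplitude
print(model.is_anomalous(normal_day), model.is_anomalous(worn_day))
```

Because load is one of the features, the covariance captures how temperature and fault amplitude normally move with load, which is one simple way to reflect the "response to different loads" described above.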

Phase 2: Detect Persistent Change

Change-Point Detection: Apply statistical methods such as the Cumulative Sum (CUSUM) control chart or Bayesian change-point detection to the anomaly scores produced by the Phase 1 model. These techniques are designed to detect a persistent shift in the motor's behavior, indicating that a new, more degraded state has been reached.
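A minimal one-sided (upper) tabular CUSUM applied to daily anomaly scores. It only accumulates evidence of scores rising above their baseline level. The allowance `k` and decision interval `h` are expressed in baseline standard deviations; `k = 0.5`, `h = 5` are common textbook starting points, not values tuned for this motor. The score streams are synthetic and treated as roughly Gaussian, which is an assumption for illustration.

```python
import numpy as np


def cusum_upper(scores, target_mean, target_std, k=0.5, h=5.0):
    """One-sided (upper) tabular CUSUM.

    Returns the index of the first sample at which the cumulative sum of
    standardized excesses above target_mean (minus allowance k) exceeds h,
    or None if no alarm is raised.
    """
    s = 0.0
    for t, x in enumerate(scores):
        z = (x - target_mean) / target_std
        s = max(0.0, s + z - k)  # reset to zero while scores stay near the baseline
        if s > h:
            return t
    return None


# Baseline scores from the reference year, then a live stream whose mean
# shifts upward after sample 60 (synthetic numbers for illustration)
rng = np.random.default_rng(1)
baseline_scores = rng.normal(1.0, 0.2, 365)
live_scores = np.concatenate([rng.normal(1.0, 0.2, 60), rng.normal(1.3, 0.2, 60)])
alarm = cusum_upper(live_scores, baseline_scores.mean(), baseline_scores.std())
print("First CUSUM alarm at sample index:", alarm)
```

Unlike a single-point threshold, the running sum ignores isolated spikes but reacts to a small shift that persists for several days.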

Short-Term Trend Analysis: Once an anomaly is detected and persists, you can analyze the trend of the underlying features (e.g., bearing fault frequency amplitude). A consistently increasing trend in a key fault feature, even over a few weeks, is a strong indicator of an accelerating fault and provides a qualitative, short-term RUL warning.
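One simple way to quantify such a trend is an ordinary least-squares slope over the last few weeks of a fault feature. This sketch assumes one value per day; the 21-day window and the alarm level are hypothetical parameters, and the days-to-alarm figure is a naive straight-line extrapolation that will be optimistic if the fault is accelerating.

```python
import numpy as np


def trend_warning(feature, window=21, alarm_level=None):
    """Least-squares slope of the last `window` daily values of a fault feature.

    Returns (slope_per_day, days_to_alarm). days_to_alarm is a naive linear
    extrapolation to `alarm_level`; it is None when no level is given or the
    fitted trend is not increasing.
    """
    y = np.asarray(feature, dtype=float)[-window:]
    t = np.arange(len(y))
    slope, intercept = np.polyfit(t, y, 1)  # degree-1 fit returns [slope, intercept]
    days_to_alarm = None
    if alarm_level is not None and slope > 0:
        current = intercept + slope * t[-1]  # fitted value for the latest day
        days_to_alarm = max(0.0, (alarm_level - current) / slope)
    return slope, days_to_alarm


# Hypothetical bearing fault amplitude: flat for 60 days, then rising 0.002 per day
bfa_history = [0.40] * 60 + [0.40 + 0.002 * i for i in range(1, 22)]
slope, days = trend_warning(bfa_history, window=21, alarm_level=0.60)
print("slope = {:.4f}/day, naive days to alarm level = {:.0f}".format(slope, days))
```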

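Phase 3: Physics-Informed Prognostics

Physics-Informed Modeling: This is the most robust route to an actual RUL estimate in this scenario, because it supplies the missing history as prior knowledge. We start from a general physics-based degradation model (e.g., a standard bearing wear curve or an exponential model for insulation breakdown), parameterized so that it represents a "typical" 13-year lifespan.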
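To make the "typical 13-year lifespan" concrete, here is one way to calibrate the exponential variant, assuming health $H$ is expressed in percent, starts at 100%, and the motor is considered failed once it reaches a threshold $H_{\text{fail}}$. The value $H_{\text{fail}} = 20\%$ is an assumption, chosen because it is roughly what the implementation outline's $\lambda = 0.124$ per year gives at 13 years ($100\,e^{-0.124 \cdot 13} \approx 19.9\%$).

$$
H(t) = 100\,e^{-\lambda t}, \qquad
\lambda = \frac{\ln\left(100 / H_{\text{fail}}\right)}{T_{\text{OEM}}} = \frac{\ln 5}{13} \approx 0.124\ \text{yr}^{-1}
$$

$$
t_{\text{eq}}(H) = \frac{1}{\lambda}\ln\frac{100}{H}, \qquad
\text{RUL}(H) = \frac{1}{\lambda}\ln\frac{H}{H_{\text{fail}}} = T_{\text{OEM}} - t_{\text{eq}}(H)
$$

A motor that has aged exactly as expected has $H(10) = 100 \cdot 5^{-10/13} \approx 29\%$, so $t_{\text{eq}} = 10$ years and $\text{RUL} = 3$ years. A motor whose observed health is lower at age 10 gets a larger equivalent age $t_{\text{eq}}$ and a correspondingly shorter RUL.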

Anchor with Real Data using Particle Filtering: The general model is treated as a prior belief. We then use the one year of real feature data and a Particle Filter to update this prior, correcting the estimate of where the motor sits on the curve. The algorithm essentially asks: "Given this real-world data from years 9 to 10, where on this typical 13-year degradation curve is this specific motor most likely to be?"

Prognostic Value: This provides a probabilistic RUL estimate. It might determine, for example, that based on the last year's data, there is a 70% chance the motor is behaving like a typical 10.5-year-old motor and has ~2.5 years of life left, but a 10% chance it's already at a 12-year degradation level with only 1 year left. This is a powerful tool for risk-based maintenance planning.
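Given particles from the filter, probabilities like these can be read off by mapping each particle's health onto the typical curve. This sketch assumes the exponential model $H(t) = 100\,e^{-\lambda t}$ with $\lambda = 0.124$ per year (the `DECAY_RATE_LAMBDA` of the implementation outline) and an assumed failure threshold of 20% health, roughly what that $\lambda$ gives at 13 years. The particle cloud here is synthetic, not an actual filter result.

```python
import numpy as np

LAMBDA = 0.124   # per year, matches DECAY_RATE_LAMBDA in the implementation outline
H_FAIL = 20.0    # assumed failure threshold in % health (about H(13) for this lambda)


def rul_distribution(particles, lam=LAMBDA, h_fail=H_FAIL):
    """Map particle health values (%) to equivalent age and RUL, both in years."""
    h = np.clip(np.asarray(particles, dtype=float), 1e-6, 100.0)
    equivalent_age = np.log(100.0 / h) / lam          # position on the typical curve
    rul = np.maximum(np.log(h / h_fail) / lam, 0.0)   # time until health reaches h_fail
    return equivalent_age, rul


# Synthetic particle cloud standing in for the filter's posterior
rng = np.random.default_rng(2)
particles = rng.normal(27.0, 2.5, 5000)
age, rul = rul_distribution(particles)
print("Median equivalent age: {:.1f} years".format(np.median(age)))
print("P(RUL < 1 year)  = {:.0%}".format(np.mean(rul < 1.0)))
print("P(RUL > 2 years) = {:.0%}".format(np.mean(rul > 2.0)))
```

Summaries of this kind are what turn a particle cloud into risk statements a maintenance planner can act on.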

Conclusion: Will This Work?

Yes. This strategy is feasible and follows established practice for late-life assets with limited data.

Key Factors for Success:

- Focus on Change Detection: The strategy wisely focuses on detecting change relative to the recent past rather than making absolute predictions about the far future based on an unknown history.

- Leveraging the "Wear-Out" Phase: The motor is in the most informative part of its life. Faults are more likely to initiate and accelerate, so the one year of data is more likely to contain subtle degradation signals than data from years 1-2 would.

- Data-Efficient Models: Unsupervised models and statistical process control methods are designed to work with limited data and do not require labeled failure examples to provide value.

Risks and Mitigations:

- Risk: The motor is already in a state of rapid failure. The one year of data might not represent a stable baseline but an active, ongoing failure.

- Mitigation: This is precisely what the anomaly detection model will reveal. If a large portion of the initial "baseline" data is already anomalous, it's a clear signal that the asset requires immediate inspection, bypassing the need for a long-term RUL.

- Risk: The data is not sufficiently rich. If the extracted features do not have a strong correlation with the motor's health, the model will be ineffective.

- Mitigation: This underscores the importance of the edge processing step. Ensure that the extracted features are based on proven Motor Current Signature Analysis (MCSA) principles (e.g., amplitudes at specific bearing fault frequencies) and not just generic statistical metrics.
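As a sketch of what "features based on MCSA principles" can mean: the ball-pass frequencies of a rolling bearing follow from its geometry, and in the stator current a bearing defect is commonly sought at the sidebands $|f_s \pm m f_v|$ around the supply frequency $f_s$, where $f_v$ is the bearing's characteristic frequency. The bearing geometry, shaft speed, sampling rate, and synthetic current signal below are hypothetical; a real deployment would use the bearing's datasheet dimensions and the measured shaft speed.

```python
import numpy as np


def bearing_defect_frequencies(shaft_hz, n_balls, ball_d, pitch_d, contact_deg=0.0):
    """Outer-race (BPFO) and inner-race (BPFI) ball-pass frequencies in Hz."""
    ratio = (ball_d / pitch_d) * np.cos(np.radians(contact_deg))
    bpfo = 0.5 * n_balls * shaft_hz * (1 - ratio)
    bpfi = 0.5 * n_balls * shaft_hz * (1 + ratio)
    return bpfo, bpfi


def mcsa_sidebands(supply_hz, fault_hz, harmonics=(1, 2)):
    """Current-spectrum frequencies |f_s +/- m * f_v| for the given harmonics m."""
    return sorted({abs(supply_hz + sign * m * fault_hz) for m in harmonics for sign in (-1, 1)})


def amplitude_at(signal, fs, target_hz, tol_hz=0.5):
    """Peak single-sided amplitude of a Hann-windowed FFT within +/- tol_hz of target_hz."""
    window = np.hanning(len(signal))
    spectrum = np.abs(np.fft.rfft(signal * window)) * 2 / window.sum()
    freqs = np.fft.rfftfreq(len(signal), d=1 / fs)
    band = (freqs >= target_hz - tol_hz) & (freqs <= target_hz + tol_hz)
    return spectrum[band].max()


# Hypothetical motor and bearing: 60 Hz supply, 29.5 Hz shaft speed, 9 balls, dimensions in mm
supply_hz, shaft_hz = 60.0, 29.5
bpfo, bpfi = bearing_defect_frequencies(shaft_hz, n_balls=9, ball_d=7.94, pitch_d=39.04)
targets = mcsa_sidebands(supply_hz, bpfo)

# Synthetic 20 s stator-current record with a small outer-race sideband injected
fs = 5000.0
t = np.arange(int(fs * 20)) / fs
rng = np.random.default_rng(3)
current = (10 * np.sin(2 * np.pi * supply_hz * t)
           + 0.05 * np.sin(2 * np.pi * (supply_hz + bpfo) * t)
           + rng.normal(0, 0.01, t.size))

features = {round(f, 2): amplitude_at(current, fs, f) for f in targets}
print(features)
```

Amplitudes at these physically motivated frequencies are the kind of feature that tracks bearing health far more directly than, say, the overall RMS of the current.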

High-Level Implementation in Python (Principle Outline)

```python
import numpy as np

# 1. DEFINE THE MODELS (THE "PLUGGABLE BRAINS")

def system_model(health_state, dt=1, daily_decay_rate=0.00034):
    """Predicts the next health state based on the physics of aging.

    Applies the same exponential decay used for the initial health below;
    daily_decay_rate is roughly DECAY_RATE_LAMBDA / 365 (0.124 / 365).
    """
    health_state = np.asarray(health_state, dtype=float)
    predicted_health = health_state * np.exp(-daily_decay_rate * dt)
    # Independent random noise per particle to represent process uncertainty
    noise = np.random.normal(0, 0.1, size=health_state.shape)
    return predicted_health + noise


def measurement_model(measurement, health_state):
    """Calculates the likelihood of a measurement given each particle's health."""
    # Guard against division by zero if a particle drifts to (near) zero health
    health_state = np.maximum(health_state, 1e-3)
    # Relationship between health and sensor values. These constants are
    # illustrative and must be derived from data or expert knowledge.
    expected_bfa = (10 / health_state) + 0.05         # bearing fault amplitude
    expected_temp = 0.1 * (100 - health_state) + 1.0  # temperature feature
    # Gaussian likelihood (unnormalized) of the actual measurement given the expected value
    error_bfa = measurement['bfa'] - expected_bfa
    likelihood_bfa = np.exp(-0.5 * (error_bfa / 0.02) ** 2)
    error_temp = measurement['temp'] - expected_temp
    likelihood_temp = np.exp(-0.5 * (error_temp / 0.5) ** 2)
    # Combined weight; the tiny floor keeps the weights from all becoming zero
    return likelihood_bfa * likelihood_temp + 1e-99


# 2. THE GENERIC PARTICLE FILTER CLASS

class ParticleFilter:
    def __init__(self, num_particles, initial_estimate, initial_uncertainty):
        self.num_particles = num_particles
        self.particles = np.random.normal(initial_estimate, initial_uncertainty, num_particles)
        self.weights = np.ones(num_particles) / num_particles

    def predict(self, system_model_func):
        """Move all particles forward according to the system model."""
        self.particles = system_model_func(self.particles)

    def update(self, measurement, measurement_model_func):
        """Update weights based on how well particles explain the measurement."""
        # Bayes' rule: multiply the prior weights by the likelihood, then normalize
        self.weights = self.weights * measurement_model_func(measurement, self.particles)
        self.weights /= np.sum(self.weights)

    def resample(self):
        """Reproduce particles in proportion to their weights (multinomial resampling)."""
        indices = np.random.choice(self.num_particles, self.num_particles, p=self.weights)
        self.particles = self.particles[indices]
        self.weights.fill(1.0 / self.num_particles)

    def get_estimate(self):
        """Return the weighted average of all particles as the best estimate."""
        return np.average(self.particles, weights=self.weights)


# 3. MAIN SIMULATION

def simulate_edge_measurement(true_health):
    """Hypothetical stand-in for the edge device: one day's noisy features."""
    return {
        'bfa': 10 / true_health + 0.05 + np.random.normal(0, 0.02),
        'temp': 0.1 * (100 - true_health) + 1.0 + np.random.normal(0, 0.5),
    }


if __name__ == "__main__":
    # --- Configuration ---
    MOTOR_AGE_YEARS = 10
    DECAY_RATE_LAMBDA = 0.124  # per year: health = 100 * exp(-lambda * age)
    initial_health = 100 * np.exp(-DECAY_RATE_LAMBDA * MOTOR_AGE_YEARS)

    # --- Initialization ---
    pf = ParticleFilter(num_particles=5000, initial_estimate=initial_health, initial_uncertainty=3.0)

    # --- Daily Monitoring Loop ---
    # In production, replace the simulated motor with get_data_from_edge_device().
    true_health = initial_health - 2.0  # simulated motor, slightly more worn than the prior
    for day in range(365):
        pf.predict(system_model)
        true_health *= np.exp(-DECAY_RATE_LAMBDA / 365)
        todays_measurement = simulate_edge_measurement(true_health)
        pf.update(todays_measurement, measurement_model)
        estimated_health = pf.get_estimate()
        print("Day {}: Estimated Health = {:.2f}%".format(day + 1, estimated_health))
        pf.resample()
```

Supporting Research and References

- Lv, Y., Zheng, P., et al. (2023). A Predictive Maintenance Strategy for Multi-Component Systems... This paper's use of a particle filter to combine a degradation model with real-time sensor data is directly applicable to the Phase 3 strategy, providing a method to handle the unknown history.
  https://www.mdpi.com/2227-7390/11/18/3884

- Cabo, A., et al. (2021). Unsupervised Anomaly Detection for Wind Turbine Condition Monitoring. Energies. This paper, while focused on turbines, demonstrates the methodology of using unsupervised learning (Autoencoders) to model a complex asset's normal behavior and detect deviations, which is the core of the Phase 1 strategy.
  https://www.mdpi.com/1996-1073/14/19/6220

- Siahpour, S., et al. (2021). A Novel Unsupervised Approach for Remaining Useful Life Estimation of Bearings. IEEE Transactions on Industrial Electronics. This research showcases an unsupervised approach for RUL that does not rely on run-to-failure data, using concepts like Self-Organizing Maps to identify and track degradation states. This supports the idea that value can be derived without extensive labeled historical data.
  https://ieeexplore.ieee.org/abstract/document/9360879

Related articles

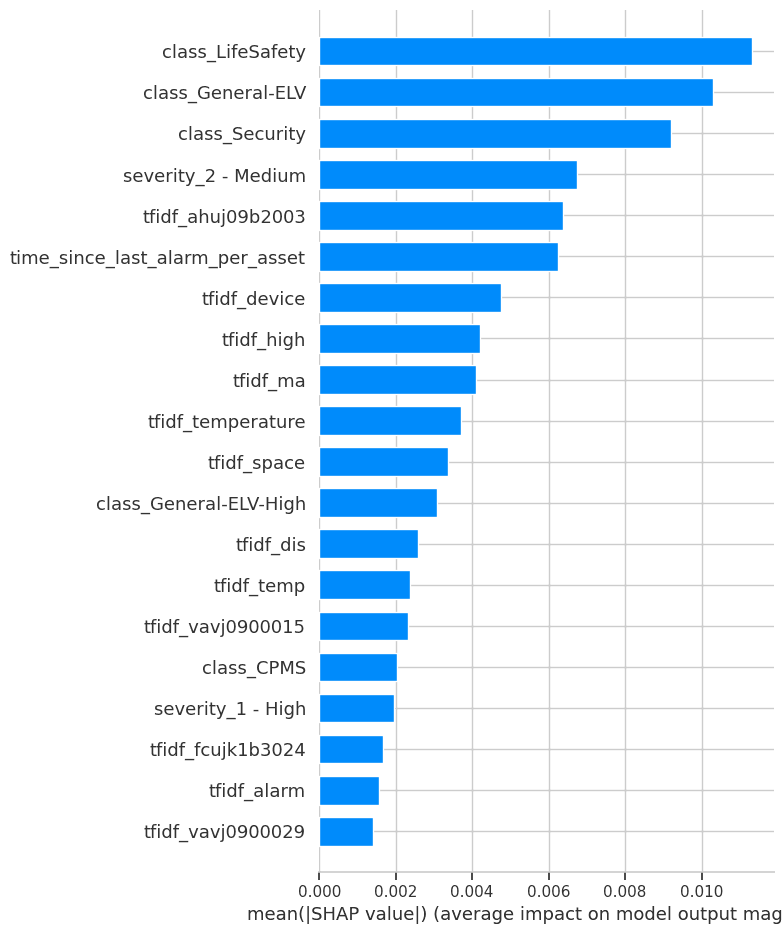

Project Report: Symptomatic Alarm Pattern Discovery and Root Cause Analysis

A formal summary of Project ID a496e3ae-5149-4a15-86dd-a3aee47f493f. This report details the full execution of the project, from the analysis of 102,319 alarm records to the development of an analytical pipeline for anomaly detection and the strategic vision for future capabilities.

The Prognostics & RUL Cheat Sheet: A Guide for Real-World Assets

Ready to move from theory to reality with predictive maintenance? The first step is choosing the right RUL model—a choice that depends entirely on your data. This comprehensive cheat sheet demystifies the options, detailing four key methodologies.

Ready to get started with ML4Industry?

Discover how our machine learning solutions can help your business decode complex machine data and improve operational efficiency.

Get in touch