The Prognostics & RUL Cheat Sheet: A Guide for Real-World Assets

Ready to move from theory to reality with predictive maintenance? The first step is choosing the right RUL model—a choice that depends entirely on your data. This comprehensive cheat sheet demystifies the options, detailing four key methodologies.

The Prognostics & RUL Cheat Sheet: A Guide for Real-World Assets

1. The Business Imperative: Why Invest in Prognostics?

Predictive Maintenance (PdM) is not just a technical upgrade; it's a fundamental business strategy. The ultimate goal of prognostics—forecasting the Remaining Useful Life (RUL) of an asset—unlocks significant business value by transforming maintenance from a cost center into a strategic advantage.

The Core Value Proposition:

Drastically Reduce Unplanned Downtime: Unplanned downtime is the single largest source of lost revenue in many industrial operations. RUL forecasts allow maintenance to be scheduled during planned outages, maximizing production hours.

Optimize MRO & Inventory Costs: Instead of stocking spare parts based on conservative schedules ("just in case"), you can order them based on data-driven forecasts ("just in time"), reducing capital tied up in inventory.

Shift from Reactive to Proactive Operations: Move away from running equipment to failure (reactive) or replacing parts that are still healthy (preventive). Prognostics enables replacing components only when their data indicates they need to be.

Enhance Safety and Reliability: By identifying at-risk assets long before failure, you can mitigate safety hazards and improve the overall reliability of your entire operation.

Enable Data-Driven Capital Planning: Accurate RUL forecasts for a fleet of assets provide a clear roadmap for future capital expenditures, allowing for more strategic and predictable budgeting.

2. The AI Toolkit: Anomaly, Diagnosis, and Prognosis

To achieve these goals, we use a hierarchy of AI models. It's crucial to understand the role of each.

3. The Core Challenge: Why Prognostics is Hard

This section details the challenges presented in the seminar slide.

Challenges in Prognosis

Uncertainty in the degradation model

- Models are developed in idealized conditions with many assumptions.

- Dependency of model parameters on material properties.

- Changing physics of degradation depending on the state of wear.

Compromise between accuracy and computational complexity

- Model-based methods

- Handles Gaussian / non-Gaussian signals.

- Accurate with high computational complexity.

- Data-driven methods

- Extract useful features from collected data.

- Accuracy highly depends on the quality and quantity of data.

4. RUL Methodologies: Choosing Your Approach

The right RUL model depends on your data, your understanding of the asset, and your business goals.

This approach uses engineering and physics principles to create a mathematical model of degradation.

How it Works: You create a formula that describes how a component wears out based on physical laws. For example, a formula for how a metal crack grows with each stress cycle. The RUL is the time it takes for the calculated degradation to reach a critical failure point.

Data Needed:

- Physical parameters of the asset (material properties, geometry).

- Real-time operational data (loads, temperatures, stress cycles).

Example: Modeling the RUL of a turbine blade. You use the Paris Law equation, which describes fatigue crack growth. You feed the model the number of flight cycles (stress) and material constants to calculate the current crack size and predict when it will reach a critical length.

Common Techniques: Paris Law (fatigue), Arrhenius Equation (thermal degradation), corrosion models.

Strengths:

- Excellent for new assets with no historical failure data.

- Highly interpretable and based on proven engineering principles.

Weaknesses:

- Models are often idealized and can't capture all real-world complexities.

- Does not easily adapt or learn from an asset's unique operational history.

This approach uses historical data to learn degradation patterns without explicit knowledge of the underlying physics.

A) Survival Models

How it Works: Uses statistical analysis on historical failure times to determine the probability of survival. It answers: "Given that this pump has run for 3,000 hours, what is the probability it will fail in the next 100 hours?"

Data Needed: A simple list of lifespans from a large population of similar components (e.g., "Pump A failed at 4,100 hrs, Pump B at 3,850 hrs...").

Example: Analyzing a fleet of 500 identical water pumps. By plotting the failure times, you can fit a Weibull distribution to the data. This distribution can then predict the failure probability for any pump at any point in its life.

B) Similarity Models

How it Works: This is a "look-alike" approach. It finds assets from your historical data that have degradation profiles most similar to your current asset. The known lifespans of these "twins" are then used to forecast the RUL of your asset.

Data Needed: A rich dataset of complete run-to-failure sensor histories from many similar assets.

Example: Predicting the RUL of a specific aircraft engine. The model analyzes its last 20 flights and finds 10 historical engines from the fleet whose sensor data (temperature, pressure, etc.) trended in a very similar way. It then uses the known failure times of those 10 engines to create a probabilistic RUL forecast.

Common Techniques: k-Nearest Neighbors (k-NN), Dynamic Time Warping (DTW), LSTMs.

Comparison: Survival vs Similarity Models

Survival Models:

- Strengths: Minimal data requirements, statistically robust for large populations

- Weaknesses: Not personalized, cannot incorporate real-time sensor data

Similarity Models:

- Strengths: Can be extremely accurate with high-quality historical datasets

- Weaknesses: Requires rare and expensive run-to-failure data

This is the state-of-the-art approach that fuses the first two methods, creating a Probabilistic Digital Twin—a living, self-correcting model of your asset's health.

What is a Probabilistic Digital Twin? It's not just a 3D CAD model. It is a simulation model of the asset's degradation that is continuously updated with real-time sensor data. Its output is not a single number, but a probability distribution of the asset's health, representing the true uncertainty of the system.

How it Works:

- It starts with a Model-Based (Physics) understanding of how the asset should degrade (the "prior belief").

- It uses a sophisticated algorithm to confront this belief with incoming Data-Driven evidence from sensors.

- The model continuously self-corrects, updating its belief to create a realistic, personalized forecast.

The Engine Behind It:

- Kalman Filters: An older, powerful technique best suited for systems that degrade in a linear fashion.

- Particle Filters: A more modern and flexible algorithm that can handle highly complex, non-linear degradation. The "cloud of particles" represents the probability distribution of the digital twin's health.

Example: Our 10-year-old motor with a 13-year OEM lifespan and one year of data. The Particle Filter starts with the 13-year curve as its belief. It then uses the last year of sensor data as evidence. If the data is clean ("easy life"), the model self-corrects and predicts a lifespan longer than 13 years. If the data is poor ("hard life"), it self-corrects and predicts a much shorter RUL.

Strengths:

- The most robust and adaptable approach.

- Perfect for critical, single assets and for late-life assets with incomplete data, as it can infer the current health without needing the full history.

5. Supporting Research and References

- Lv, Y., Zheng, P., et al. (2023). A Predictive Maintenance Strategy for Multi-Component Systems...

- Relevance: Details a hybrid approach using Particle Filters to combine a degradation model with sensor data for RUL prediction. Validates the core methodology for handling incomplete data.

- Link

- Si, X. S., et al. (2011). Remaining useful life estimation–A review on the statistical data driven approaches. European Journal of Operational Research.

- Relevance: A foundational review paper that provides a deep dive into the various data-driven methodologies (Survival, Similarity, and Degradation models).

- Link

- Arulampalam, M. S., et al. (2002). A tutorial on particle filters for online nonlinear/non-Gaussian Bayesian tracking. IEEE Transactions on Signal Processing.

- Relevance: The cornerstone academic tutorial on the theory of Particle Filters, explaining why this algorithm is so powerful for solving non-linear tracking problems like prognostics.

- Link

Related articles

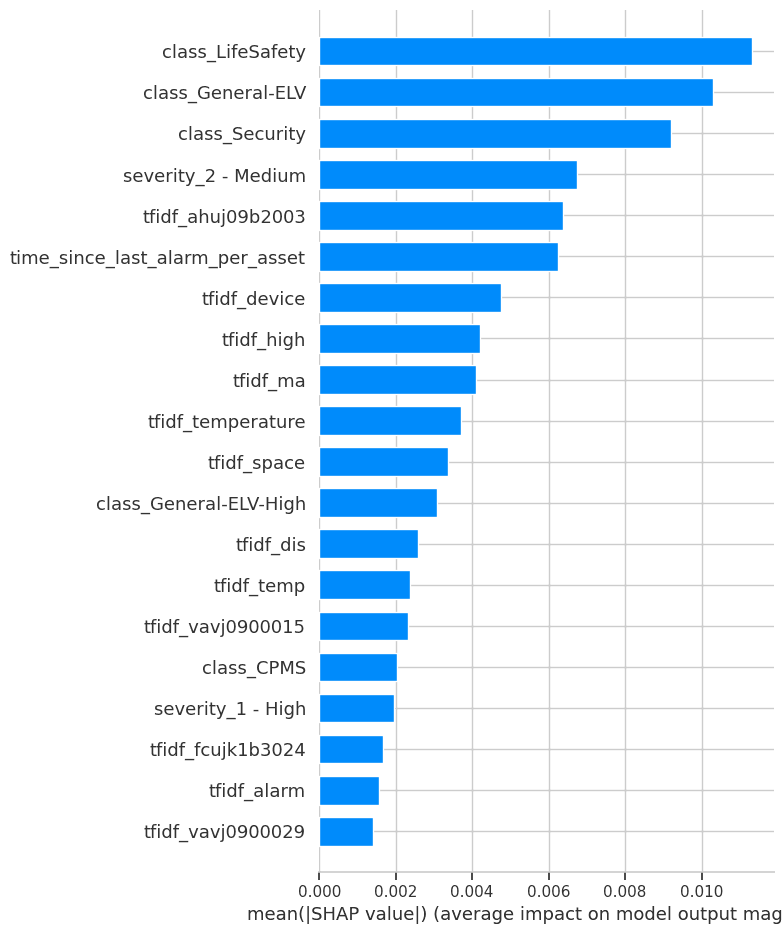

Project Report: Symptomatic Alarm Pattern Discovery and Root Cause Analysis

A formal summary of Project ID a496e3ae-5149-4a15-86dd-a3aee47f493f. This report details the full execution of the project, from the analysis of 102,319 alarm records to the development of an analytical pipeline for anomaly detection and the strategic vision for future capabilities.

Beyond the OEM Manual: A Self-Correcting AI for Real-World RUL

An OEM manual says your motor lasts 13 years. But has it had an easy life or a hard one? This report details a modern, self-correcting AI strategy that doesn't need the full history. Discover how a hybrid model uses live sensor data to uncover an asset's true health and deliver a RUL forecast that adapts to the real world.

Ready to get started with ML4Industry?

Discover how our machine learning solutions can help your business decode complex machine data and improve operational efficiency.

Get in touch